Andy McLoughlin

To the edge (again!) — welcoming Mirai to the Uncork portfolio

Sometimes you can nail the thesis but be — counts fingers — eight years too early…

Back in early 2018 I invested in a startup called Fritz, which was building a developer platform for machine learning inference at the edge. Great concept, superb team, but ultimately a few years too early: there simply weren’t enough high-value use cases to build a venture-scale business. Turns out powering Snapchat lenses does not a real business make. We eventually sold the company to Spotify, where the technology continues to power some of their clever on-device features like DJ.

Fast forward eight years and the world looks very, very different. Artificial intelligence and machine learning went from being a specialized discipline that excelled in a few use cases to the very core of how every software business is building (or re-inventing) itself.

The arrival of developer-ready LLMs created a Cambrian explosion of new technologies. Markets that had long been deemed closed to new entrants were all of a sudden wide open again. New modalities like voice and video became common. Multi-billion-dollar (paper) valuations were not uncommon for startups providing the inference infrastructure that serves the underlying cloud-based LLMs.

However, the dirty truth that most people gloss over (while everything remains up and to the right) is that cloud inference costs money. Lots of money. And companies are spending on tokens like drunken sailors on shore leave. For now, VCs are happy to continue funding the rocketship companies spending inordinate sums on cloud inference. But that won’t last — at some point people will focus on the underlying economics of these businesses and realize that something has to change.

The other big difference between 2018 and 2026 is the power of the supercomputers that live in our pockets, on our desks, and in our cars and TVs. Not long ago it would have been hard to imagine running an LLM (even a pared-down version) on a phone, but today this is very much a reality.

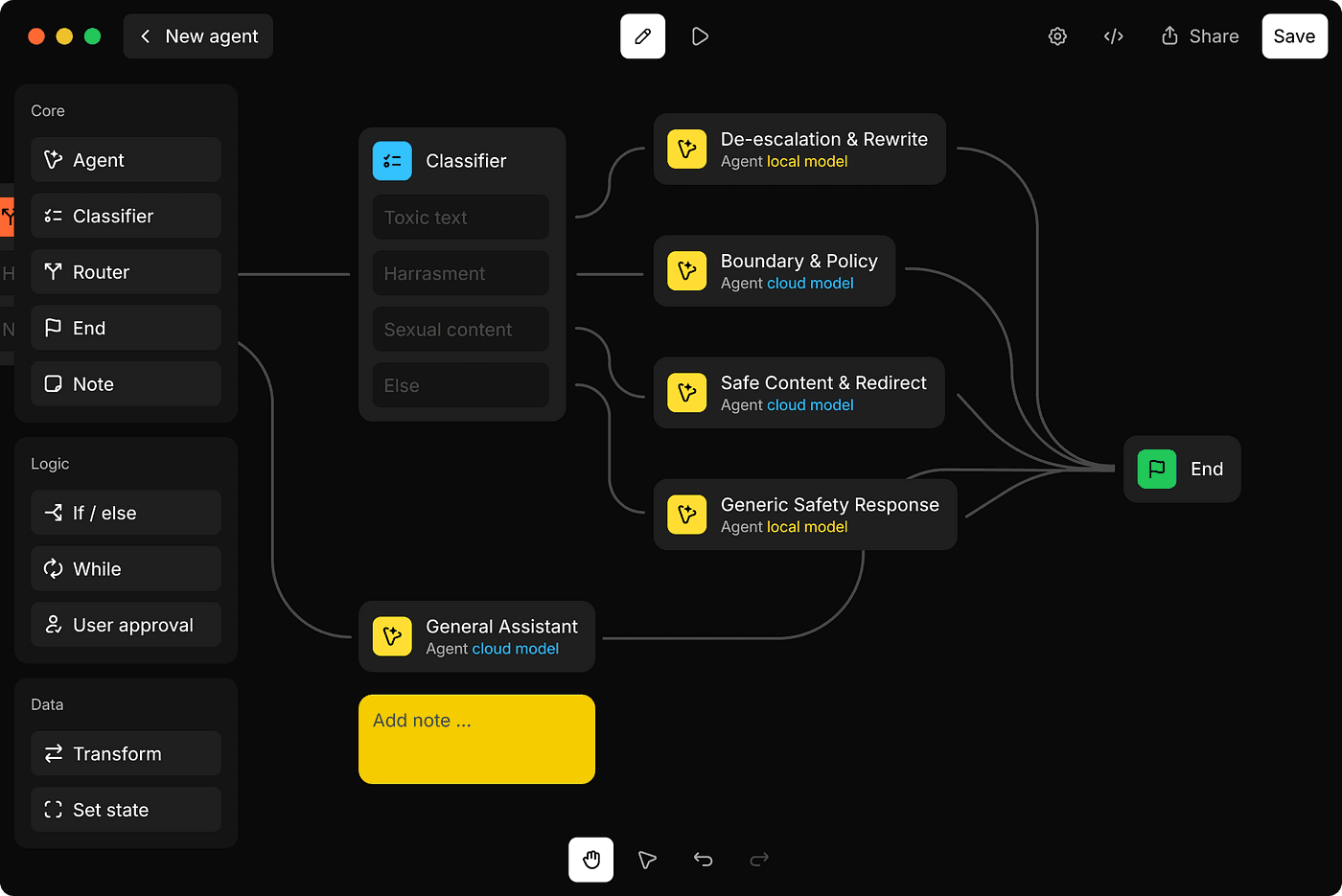

Smart routing between on-device and cloud is important

On-device inference is about more than just cost savings, though. Running inference on-device keeps chat and voice instant, with the kind of latency that a round trip to the cloud can’t match. On-device inference also brings a slew of privacy and compliance wins: sensitive data never leaves the customer’s device, so there is no risk of it leaving a sovereign region.

Apple offers MLX, its own framework for on-device machine learning, but the challenge for app developers is that their audience doesn’t all use one platform. Modern apps need to support iOS, macOS, the various flavors of Android, and Windows, as well as potentially smart TVs, Raspberry Pi, and Windows Mobile (OK, I’m joking about that last one).

Enter Mirai, a fourteen-person team who have spent the last year building a complete on-device inference stack, from model optimization and export tooling to a proprietary runtime and deployment layer. Crucially, Mirai is not a simple wrapper around existing inference stacks but a Rust-based platform for on-device execution, built from scratch.

Mirai’s co-founders Alexey Moiseenkov and Dima Shvets (Alexey is jollier IRL)

The results speak for themselves: on the model–device pairs tested, Mirai’s SDK delivers a 37% increase in generation speed and up to 59% faster prefill compared to Apple’s MLX. Benchmarks show consistent performance advantages over MLX, llama.cpp, and other open-source alternatives.

Mirai also supports a hybrid AI approach and works alongside existing cloud setups (including its partner, Baseten), so teams can seamlessly route inference between device and cloud without rewriting their pipelines.

For any seed investment I ask three questions: Is the market large? Is it growing? Do the founders have an unfair advantage? The answer to all three is a resounding yes. Mirai’s co-founders, Alexey Moiseenkov and Dima Shvets, independently built Reface and Prisma, early on-device AI products used monthly by hundreds of millions of people globally. The broader Mirai team brings deep experience in kernel optimization, compilers, and production inference systems.

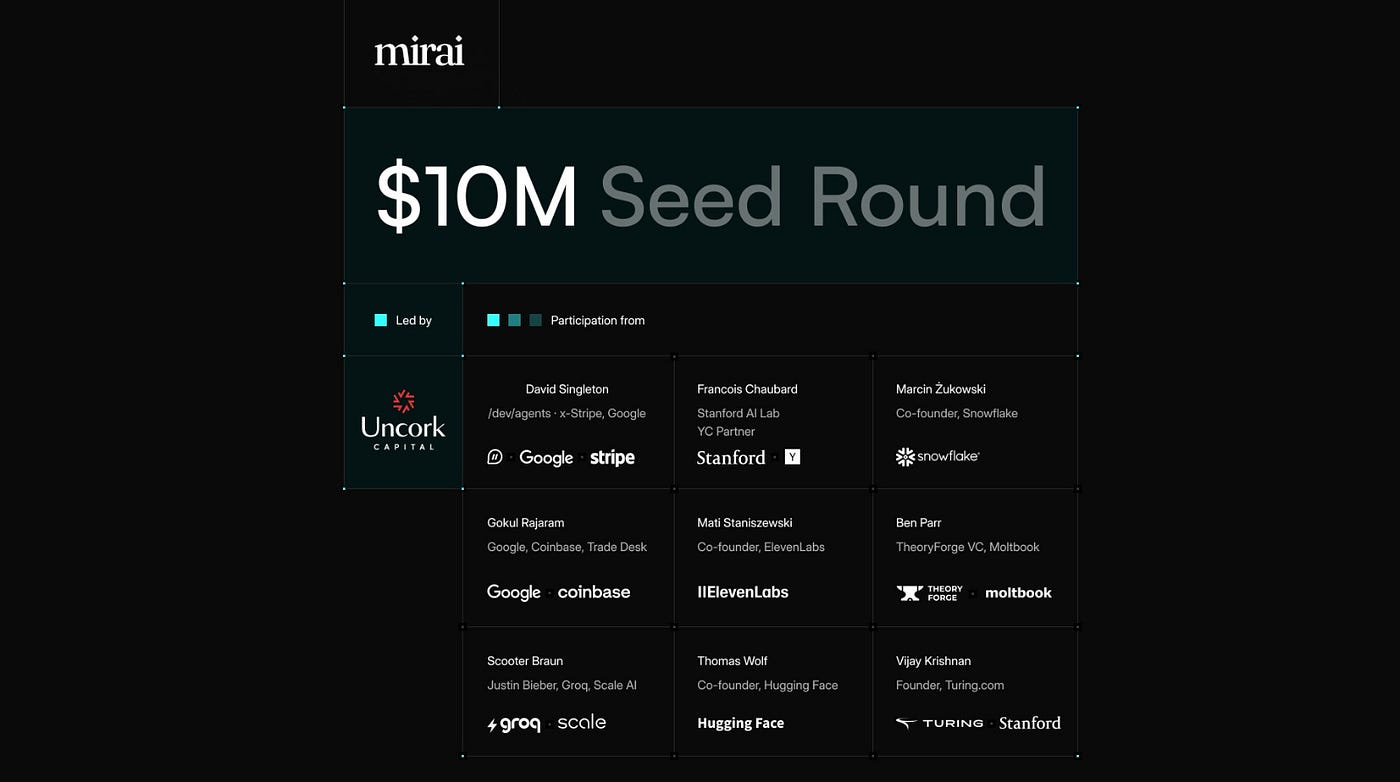

I’m delighted to announce that Uncork are leading Mirai’s $10M seed round, which includes participation from David Singleton (CEO, Dreamer; ex-CTO, Stripe), Francois Chaubard (PhD researcher, Stanford AI Lab; YC partner; Uncork founder), Marcin Żukowski (co-founder, Snowflake), Mati Staniszewski (co-founder, ElevenLabs), Gokul Rajaram (product leader, Google AdSense; board member, Coinbase and Trade Desk), Scooter Braun (early investor, Groq and Scale AI; something with Justin Bieber), Vijay Krishnan (CTO, Turing.com), and Ben Parr & Matt Schlicht (Moltbook; Theory Forge Ventures), as well as Samsung Next, Sarah Smith Fund, Garuda Ventures, and the Allison Pickens Fund.

We’re at an inflection point where AI chips in every device have created a new execution surface beyond the cloud. But the “one runtime fits all” assumption breaks down on-device, because the same model performs very differently on a CPU, a GPU, or a Neural Engine. Mirai are starting with Apple silicon, with Android coming soon. For developers, a single, highly performant “pane of glass” across all their users’ devices is incredibly compelling, as Mirai’s partnerships with model makers spanning text, voice, and vision demonstrate.

We recently celebrated a phenomenal exit in the cloud inference space when Groq was acquired by Nvidia. Our thesis is that on-device inference is an opportunity of similar size, or larger.

Please join me in welcoming Alexey, Dima, and the entire Mirai team to the Uncork portfolio! 🚀